Sharing a YouTube video to a PWA for background listening

What do you call the act of sharing something to an application? We'll find out while creating a YouTube background audio listener PWA.

Link to the repo for the lazy ones :)

If there is one thing (if only it were just one) that keeps coming back in my life as an app developer, it is the following confusing distinction:

- Sharing something from the app I’m developing: I press a button in the app to share a message in Telegram, for example.

- Wanting the app I’m developing to receive something the user shares: I’m on YouTube, I hit the share button, and I want my app to appear in the sharing panel that opens, alongside many other apps.

It’s actually quite difficult even to find the right name for scenario number 2, and naming is important since, eventually, you’ll want to look up how to do it in both native and web apps, and it’s very easy to get frustrated.

I noticed this because I’m used to developing React Native apps, where scenario number 2 was once a bit cumbersome to implement (like anything else that involved installing a native module before the auto-linking feature arrived).

This whole post, and the discoveries I made in the universe of PWAs, come from a problem I tried to solve. I wanted to see whether it was possible to listen to YouTube audio (the audio stream of the videos, that is) in the background on my smartphone, and I wanted to build it in the smartest and fastest possible way.

Today, in 2022, a React developer who wants to create an app as fast as possible can’t go wrong with Next.js, especially considering its API routes feature (if you don’t know it, go and check it out).

The sharing target

It might have been obvious for native English speakers, but in the developer world, in scenario number 2, the app you’re developing that you want to appear in the sharing panel is called a “share target”.

So you can search for it with keywords such as “react-native app share target” or “PWA share target”.

How do I know that? Because MDN documents a way for PWAs to behave as a share target with the (guess what?) Web Share Target API.

Also, the android documentation refers to it in the same way whereas in iOS you need to define a “share extension” (this is because in Xcode you have extensions that are used for notifications and all sorts of other stuff).
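To make the idea concrete, here is a minimal sketch of the manifest entry that registers a PWA as a share target. The `share_target` member and its `action`, `method` and `params` fields come from the Web Share Target API; the `/share` route and the other manifest values are assumptions picked for illustration, not necessarily what the repo uses.

```javascript
// Sketch of a web app manifest with a share_target entry.
// ASSUMPTIONS: the "/share" action and the name/start_url/display values
// are illustrative placeholders.
const manifest = {
  name: "youtube-background-pwa",
  start_url: "/",
  display: "standalone",
  share_target: {
    // Route of the PWA that gets opened when the user picks it in the sharing panel
    action: "/share",
    // With GET, the shared data arrives as query string parameters
    method: "GET",
    // Maps the shared fields to the query parameter names the app wants to read
    params: { title: "title", text: "text", url: "url" },
  },
};

// Serialize it the way it would be served as the manifest file
const manifestJson = JSON.stringify(manifest, null, 2);
console.log(manifestJson);
```

Once the PWA is installed from a browser that supports the API, the app should show up in the system sharing panel and be opened at the `action` URL with the shared data attached.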

youtube-background-pwa

Here is the link to the repo I put together, with a Next.js app deployed on Vercel.

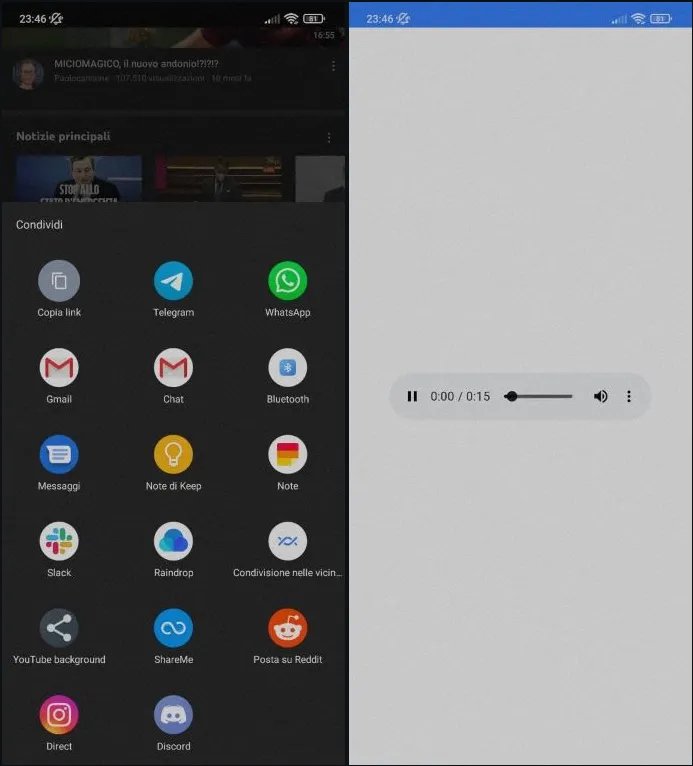

Here are some screenshots of how the app is supposed to work. You basically open the YouTube app (or the website, it doesn’t really matter), hit the share button for the video you’d like to listen to in the background, and then you’ll have an HTML audio player waiting for you.
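On the receiving side, the page opened by the share has to dig the video link out of the shared fields. The sketch below assumes the data arrives as `title`, `text` and `url` query parameters and that the sharing app may put the link in `text` rather than in `url`, so it checks all of them. `extractSharedLink` is a hypothetical helper, not something taken from the repo.

```javascript
// Hypothetical helper: return the first http(s) URL found in the shared fields.
// ASSUMPTION: the shared data arrives as "url", "text" and "title" query parameters.
function extractSharedLink(searchParams) {
  for (const key of ["url", "text", "title"]) {
    const value = searchParams.get(key);
    if (!value) continue;
    // The field may contain extra words around the link, so search inside it
    const match = value.match(/https?:\/\/\S+/);
    if (match) return match[0];
  }
  return null;
}

// Example: a share that carries the link inside `text` (VIDEO_ID is a placeholder)
const params = new URLSearchParams({
  title: "Some video",
  text: "Watch this https://youtu.be/VIDEO_ID",
});
console.log(extractSharedLink(params)); // https://youtu.be/VIDEO_ID
```

In the browser you would pass `new URLSearchParams(window.location.search)` and hand the result to the audio player.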

All this was done in a couple of hours (what took me the longest was figuring out why the Google CDN was sometimes returning a 403; read more about this below).

Limitations with the hoster

The code works, and if you want to host it yourself, feel free: it will work great.

But the problem you will run into when using the URL of the app deployed on Vercel is that videos longer than 1 minute (give or take) will not let you hit the play button, and my API response will time out.

Why? Because ytdl-core, the package I used to extract the direct URL of a YouTube video, states in its README that the IP that requests the direct URL must be the same IP that eventually uses it. Of course, ytdl-core is a Node.js module that can’t be used in the browser, so the URL is extracted on Vercel, inside the /api/stream endpoint of my app. The client can’t use that URL directly, since its IP would be different and the request would return a 403*.

So the solution was to let the endpoint act as a transparent proxy that returns a Node stream directly to the client. This means using the hoster’s bandwidth, and Vercel doesn’t like that (we currently have a 5MB limit), which is the reason for the limit on video length. The Node code looks like this:

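    import ytdl from "ytdl-core";

    export default async function handler(req, res) {
      const { link } = req.query;
      if (!link) return res.status(400).json({ error: "There is no link bro" });

      try {
        // Keep only the formats without a video track (audio only).
        const stream = ytdl(link, {
          filter: (format) => !format.hasVideo,
          quality: "highest",
        });

        // The stream reports failures asynchronously, where try/catch can't see them.
        stream.on("error", (e) => {
          console.log(e);
          if (!res.headersSent) res.status(500).json({ error: "Something went wrong" });
          else res.end();
        });

        // Pipe the audio chunks straight into the HTTP response.
        stream.pipe(res);
      } catch (e) {
        console.log(e);
        res.status(500).json({ error: "Something went wrong" });
      }
    }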

Here you basically pipe the audio chunks into the response as you read them.
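On the client, the audio player then only needs to point at the proxy endpoint instead of the direct Google URL. This is a minimal sketch of how that `src` could be built, using the /api/stream endpoint and its `link` query parameter; `buildStreamSrc` is a hypothetical helper name and VIDEO_ID is a placeholder.

```javascript
// Hypothetical helper: build the src for an <audio> element that plays
// through the /api/stream proxy endpoint.
function buildStreamSrc(videoLink) {
  // The YouTube link contains ":", "/", "?" and "=", so it must be encoded
  // before being used as a query parameter value
  return `/api/stream?link=${encodeURIComponent(videoLink)}`;
}

const src = buildStreamSrc("https://www.youtube.com/watch?v=VIDEO_ID");
console.log(src);
// In the browser: document.querySelector("audio").src = src;
```

Because every byte of audio goes through the endpoint, the request always reaches Google from the server’s IP, which avoids the 403 at the cost of the hoster’s bandwidth.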

* This is actually a very well-known limitation of tools such as ytdl-core and youtube-dl (the more famous equivalent, written in Python). Here you can find many links talking about it. There is also this StackOverflow thread.

For a more robust solution, check my GitHub profile regularly, since I’m releasing a React Native app that does the same thing without reaching for any API or server other than Google’s.

That’s all for now folks 👋🏻👋🏻😁